
Introduction

Simply defined, OCR (optical character recognition) is a set of computer vision tasks that convert scanned documents and images into machine-readable text. It takes images of documents, invoices and receipts, finds the text in them and converts it into a format that machines can process more easily. If you want to read information off ID cards or read the numbers on a bank cheque, OCR is what will drive your software.

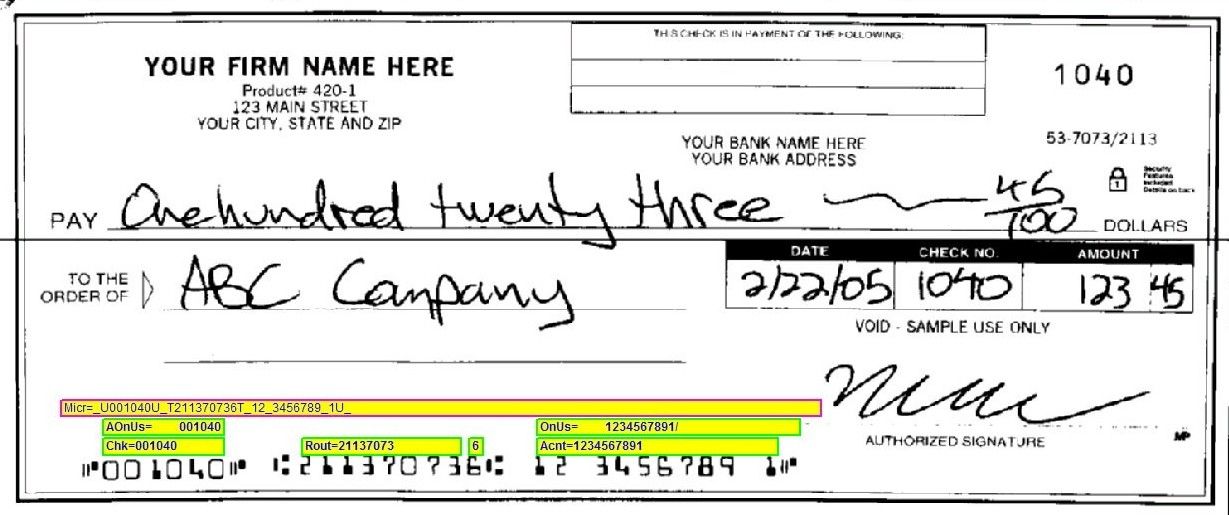

You might need to read the different characters from a cheque and extract the account number, amount, currency, date, etc. But how do you know which character corresponds to which field? What if you want to extract a meter reading? How do you know which digits are the reading and which are the numbers printed to identify the meter?

Learning how to extract text from images or how to apply deep learning to OCR is a long process and a topic for another blog post. The focus of this one is understanding where OCR technology stands, what OCR products offer, what is lacking and what can be done better.

Want to digitize invoices, PDFs or number plates? Head over to Nanonets and start building OCR models for free!

The OCR landscape

OCR is perceived by many to be a solved problem, but in reality the products available to us, whether open-source tools or APIs provided by technology giants, are far from perfect: they are too rigid, often inaccurate, and fail in the real world.

The APIs on offer are mostly restricted to a narrow set of use cases and resist customization. More often than not, a business planning to use OCR technology needs an in-house team to build on top of the OCR API available to them before they can actually apply it to their use case. The OCR technology available in the market today is mostly a partial solution to the problem.

Where the current OCR APIs fail

Product shortcomings

Don’t allow working with custom data – One of the biggest roadblocks to adopting OCR is that every use case has its nuances and requires our algorithms to deal with different kinds of data. To get good results for our use case, it is important that a model can be trained on the data we’ll be dealing with the most. This is not a possibility with the OCR APIs available to us. Consider any task involving OCR in the wild, such as reading traffic signs or shipping container numbers. Current OCR APIs do not allow for vertical reading, which makes the detection task in the image below a lot harder. These use cases need you to get bounding boxes specifically for the characters in the kind of images you will be dealing with the most.

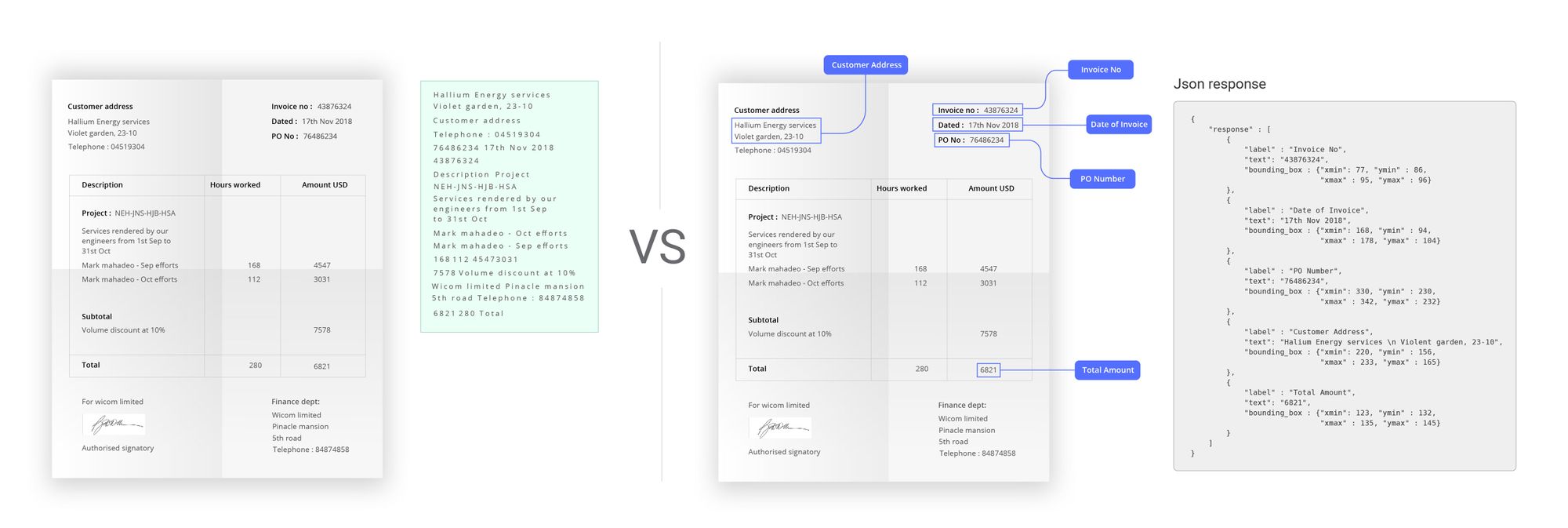

Require a considerable amount of post-processing – All the OCR APIs currently available extract text from given images. It is up to us to build on top of that output so it can be useful for our organization. Getting text out of an invoice does little good on its own. If you need to use it, you’ll have to build a layer of OCR software on top of it that allows you to extract dates, company names, amounts, product details, etc. The path to such an end-to-end product can be filled with roadblocks due to inconsistencies in the input images and a lack of organization in the extracted text. To get meaningful results, the text extracted by the OCR models needs to be intelligently structured and loaded in a usable format. This could mean that you need an in-house team of developers to turn existing OCR APIs into software that your organization can use.

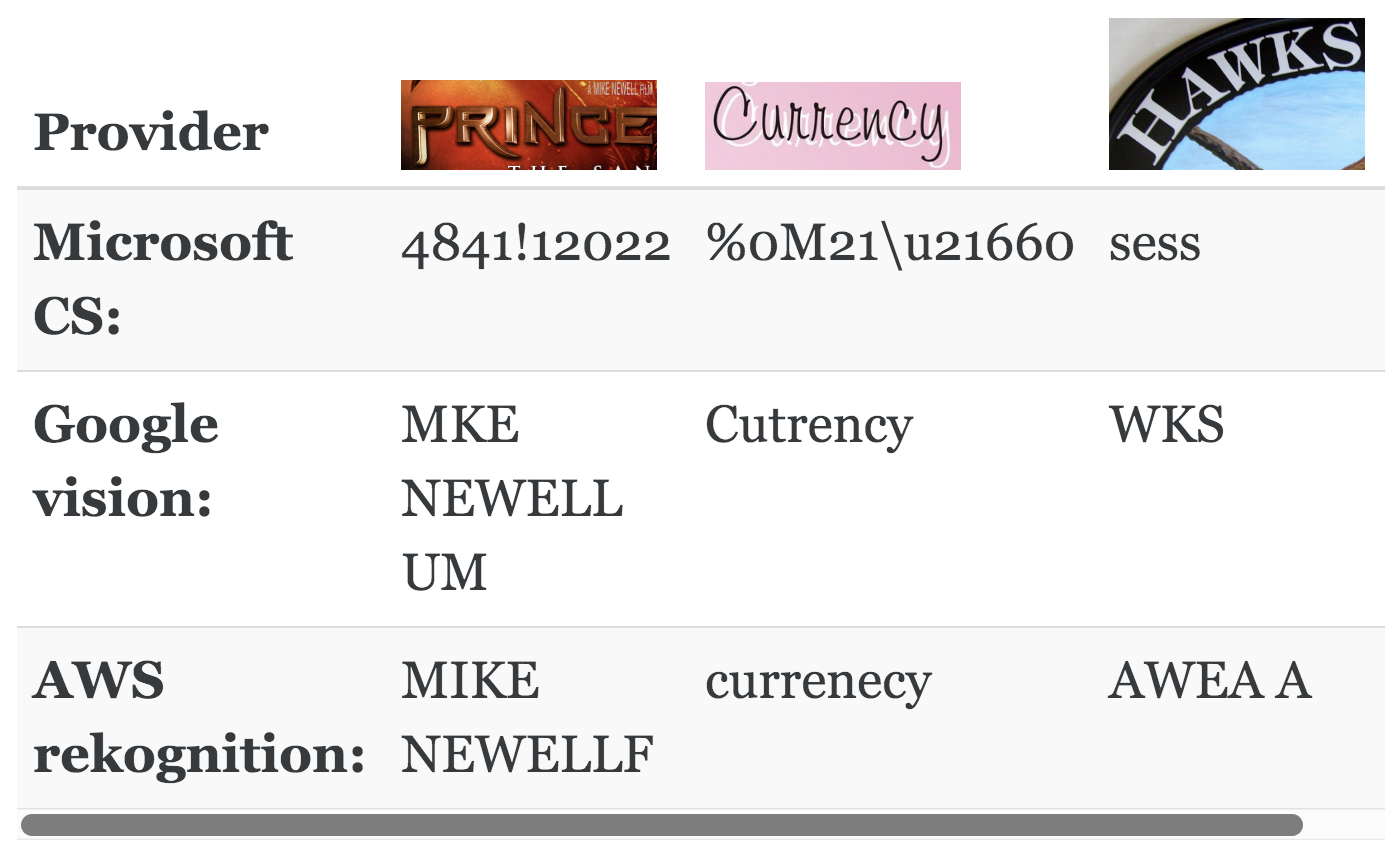

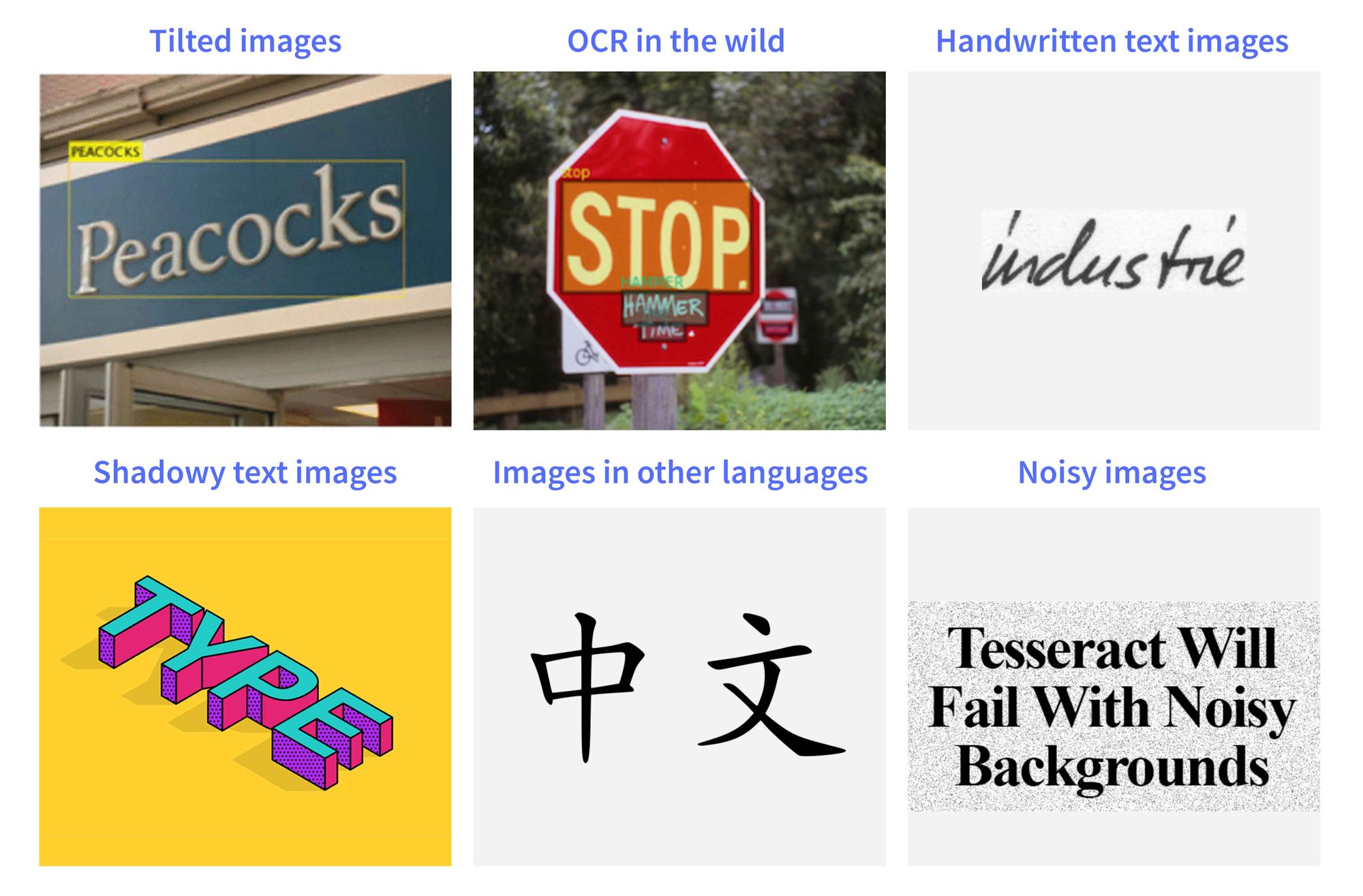
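To make the post-processing burden concrete, here is a minimal sketch of the kind of layer you would have to write on top of raw OCR output. The sample text, the field names and the regular expressions are all assumptions made up for illustration; real receipts vary far more, which is exactly why this layer gets expensive.

```python
import re
from typing import Optional

# Hypothetical raw OCR output for a receipt; real output is usually messier.
RAW_TEXT = """ACME STORES
Invoice No: INV-1042
Date: 12/03/2019
Coffee beans 2 x 7.50
TOTAL 15.00
"""

# Illustrative patterns only; each new layout tends to need its own rules.
INVOICE_RE = re.compile(r"Invoice\s+No:?\s*(\S+)", re.IGNORECASE)
DATE_RE = re.compile(r"\b(\d{2}/\d{2}/\d{4})\b")
TOTAL_RE = re.compile(r"^\s*TOTAL\s+(\d+\.\d{2})\s*$", re.IGNORECASE | re.MULTILINE)


def first_match(pattern: "re.Pattern[str]", text: str) -> Optional[str]:
    """Return the first captured group, or None when the field is absent."""
    match = pattern.search(text)
    return match.group(1) if match else None


def extract_fields(text: str) -> dict:
    """Turn unstructured OCR text into a small dictionary of named fields."""
    total = first_match(TOTAL_RE, text)
    return {
        "invoice_number": first_match(INVOICE_RE, text),
        "date": first_match(DATE_RE, text),
        "total": float(total) if total is not None else None,
    }


fields = extract_fields(RAW_TEXT)
print(fields)
```

Rules like these break as soon as a vendor changes its layout or the OCR misreads a label, so fields that cannot be found are returned as `None` instead of being guessed.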

Work well only in specific constraints – Current OCR methods perform well on scanned documents with digital text. On the other hand, handwritten documents, images of text in multiple languages at once, low-resolution images, images with new fonts and varying font sizes, images with shadowy text, etc. can cause your OCR model to make a lot of errors and leave you with poor accuracy. Rigid models that are averse to customization limit the range of applications in which the technology can perform with at least reasonable effectiveness.

Technological barriers

Tilted text in images – While current research suggests that object detection should be able to work with rotated images if the models are trained on augmented data, it is surprising to find that none of the OCR tools available in the market actually adopt object detection in their pipeline. This has several drawbacks, one of which is that your OCR model won’t pick up characters and words that are tilted. Take, for example, reading number plates. A camera attached to a street light will capture a moving car at a different angle depending on the distance and direction of the car. In such cases, the text will appear tilted. Better accuracy might mean stronger traffic law enforcement and a decrease in the rate of accidents.
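As a rough illustration of what training on augmented data involves, the sketch below rotates an annotated bounding box by a given angle so that the label stays aligned with a rotated copy of the image. The function names and the `(xmin, ymin, xmax, ymax)` box format are assumptions for this example; rotating the image pixels themselves would be done with an image library and is not shown.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (xmin, ymin, xmax, ymax), assumed format


def rotate_point(p: Point, center: Point, degrees: float) -> Point:
    """Rotate p around center using the standard 2D rotation matrix.

    Positive angles are counter-clockwise when y points up, and appear
    clockwise in image coordinates where y points down.
    """
    theta = math.radians(degrees)
    dx, dy = p[0] - center[0], p[1] - center[1]
    x = center[0] + dx * math.cos(theta) - dy * math.sin(theta)
    y = center[1] + dx * math.sin(theta) + dy * math.cos(theta)
    return (x, y)


def rotate_box(box: Box, center: Point, degrees: float) -> List[Point]:
    """Return the four corners of the box after rotation."""
    xmin, ymin, xmax, ymax = box
    corners = [(xmin, ymin), (xmax, ymin), (xmax, ymax), (xmin, ymax)]
    return [rotate_point(c, center, degrees) for c in corners]


def enclosing_box(points: List[Point]) -> Box:
    """Smallest axis-aligned box containing all points."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    return (min(xs), min(ys), max(xs), max(ys))


# Example: a 2x1 box rotated by 90 degrees around the origin.
rotated = rotate_box((0, 0, 2, 1), center=(0, 0), degrees=90)
print(enclosing_box(rotated))
```

Detectors that only predict axis-aligned boxes would train on the enclosing box; detectors that support oriented boxes could use the four rotated corners directly.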

OCR in natural scenes – OCR has historically evolved to deal with documents and though much of our documentation and paperwork happens with computers these days, there still are several use cases that require us to be able to process images taken in a variety of settings. One such example is reading shipping container numbers. Classical approaches tend to find the first character and go in a horizontal line looking for characters that follow. This approach is useless when trying to run OCR on images in the wild. These images can be blurry and noisy. The text in them can be at a variety of locations, the font might be something your OCR model hasn’t seen before, the text can be tilted, etc.

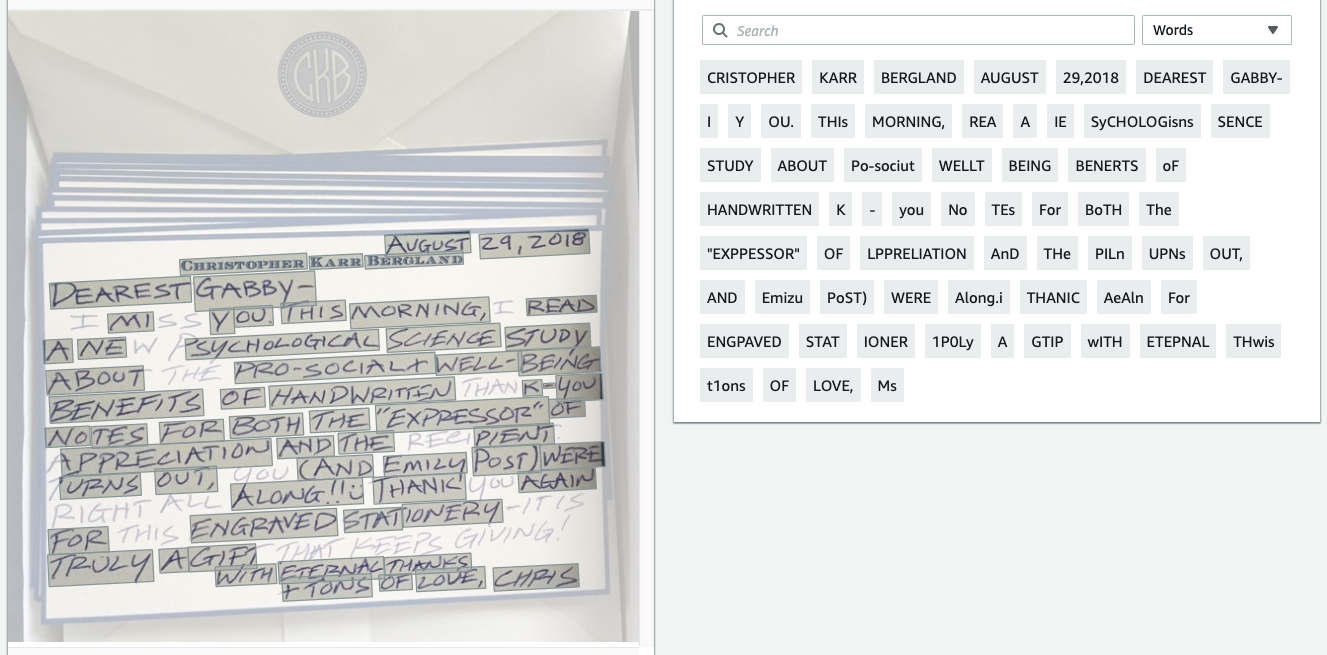

Handwritten text, cursive fonts, font sizes – The OCR annotation process requires you to identify each character as a separate bounding box and models trained to work on such data get thrown off when they are faced with handwritten text or cursive fonts. This is because a gap between any two characters makes it easy to separate one from another. These gaps don’t exist for cursive fonts. Without these gaps, the OCR model thinks that all the characters that are connected are actually one single pattern that doesn’t fit into any of the character descriptions in its vocabulary. These issues can be addressed by powering your OCR engine with deep learning.

Text in languages other than English – OCR models provided by Google and Microsoft work well on English but do not perform well with other languages. This is mostly due to the lack of enough training data and varying syntactical rules for different languages. Any platform or company that intends to use OCR for data in their native languages will have to struggle with bad models and inaccurate results. It is possible that you might want to analyze documents that contain multiple languages at once, like forms to deal with government processes. Working with such cases is not possible with the available OCR APIs.

Noisy/blurry images – Noisy images can very often throw off your classifier and cause it to produce wrong results. A blurry image can make your OCR model confuse ‘8’ with ‘B’ or ‘A’ with ‘4’. De-noising images is an active area of research in deep learning and computer vision. Making models that are robust to noise can go a long way towards a generalized approach to character recognition and image classification, and applying de-noising in character recognition tasks can improve accuracy to a great extent.
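For a feel of what de-noising means at the pixel level, here is a small sketch of a 3x3 median filter, one classical technique for removing isolated noisy pixels. It is chosen here purely for illustration and is not what any particular OCR product uses; the grayscale image is assumed to be a plain list of rows of integers.

```python
from statistics import median
from typing import List

Image = List[List[int]]  # assumed format: rows of grayscale values


def median_filter_3x3(image: Image) -> Image:
    """Replace each pixel with the median of its 3x3 neighbourhood.

    At the borders the window is clipped to the image, so it holds fewer
    pixels. For even-sized windows the median is the mean of the two middle
    values, which is truncated to an integer here.
    """
    height = len(image)
    width = len(image[0]) if height else 0
    result = [row[:] for row in image]
    for y in range(height):
        for x in range(width):
            window = [
                image[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
            ]
            result[y][x] = int(median(window))
    return result


# A flat grey patch with a single bright noise pixel in the middle.
noisy = [
    [10, 10, 10],
    [10, 255, 10],
    [10, 10, 10],
]
print(median_filter_3x3(noisy))
```

A filter like this removes isolated specks but also softens thin strokes, which is one reason learned de-noising approaches are attractive for text.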

Should I even consider using OCR then?

The short answer is yes.

Anywhere there is a lot of paperwork or manual effort involved, OCR technology can enable image- and text-based process automation. Being able to digitize information accurately can help business processes become smoother, easier and a lot more reliable, while reducing the manpower required to execute them. For big organizations that have to deal with a lot of forms, invoices, receipts, etc., being able to digitize all the information, store and structure the data, and make it searchable and editable is a step closer to a paper-free world.

Think about the following use cases.

Number plates – number plate detection can be used to implement traffic rules, track cars in your taxi service parking, enhance security in public spaces, corporate buildings, malls, etc.

Legal documents – digitizing different kinds of documents (affidavits, judgments, filings, etc.), storing them in a database and making them searchable.

Table extraction – Automatically detect tables in a document, get text in each cell, column headings for research, data entry, data collection, etc.

Banking – analyzing cheques, reading and updating passbooks, ensuring KYC compliance, analyzing applications for loans, accounts and other services.

Menu digitization – extracting information from the menus of different restaurants and putting it into a homogeneous template for food delivery apps like Swiggy, Zomato, Uber Eats, etc.

Healthcare – have patients’ medical records, history of illnesses, diagnoses, medication, etc. digitized and made searchable for the convenience of doctors.

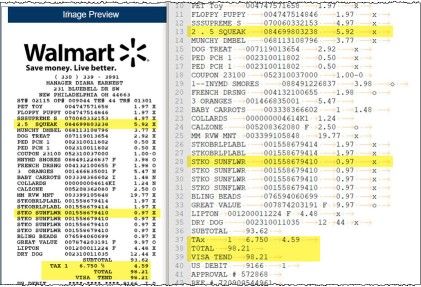

Invoices – automating reading bills, invoices and receipts, extracting products, prices, date-time data, company/service name for retail and logistics industry.

Automating business processes has proven to be a boon for organizations. It has helped them become more transparent, made communication and coordination between teams easier, and increased business throughput, employee retention, employee productivity and the quality of customer service and delivery. Automation has helped speed up business processes while simultaneously cutting costs. It has made processes less chaotic and more reliable, and helped increase employee morale. Moving towards digitization is a must to stay competitive in today’s world.

What do these OCR APIs need?

OCR has a lot of potential, but most products available today do not make it easy for businesses to adopt the technology. What OCR does is convert images with text or scanned documents into machine-readable text. What to do with that text is left up to the people using these OCR technologies, which might seem like a good thing at first. It allows people to customize the text they are working with as they want, provided they are ready to spend the resources required to make it happen. But beyond a few use cases like reading scanned documents and analyzing invoices and receipts, these technologies fail to make their case for widespread adoption.

A good OCR product would improve on the following fronts.

How it deals with the images coming in

- Does it minimize the pre-processing required?

- Can the annotation process be made easier?

- How many formats does it accept our images in?

- Do we lose information while pre-processing?

How it performs in real-world problems

- How is the accuracy?

- Does it perform well in any language?

- What about difficult cases like tilted text, OCR in the wild, handwritten text?

- Is it possible to constantly improve your models?

- How does it fare against other OCR tools and APIs?

How it uses the machine-readable text

- Does it allow us to give it a structure?

- Does it make iterating over the structure easier?

- Can I choose the information I want to keep and discard the rest?

- Does it make storage, editing and search easier?

- Does it make data analysis easier?

Nanonets and OCR

We at Nanonets have worked to build just the kind of product that solves these problems. We have been able to productize a pipeline for OCR by working with it as not just character recognition but as an object detection and classification task.

But the benefits of using Nanonets over other OCR APIs go beyond just better accuracy. Here are a few reasons you should consider using the Nanonets OCR API.

Automated intelligent structured field extraction – Assume you want to analyze receipts in your organization to automate reimbursements. You have an app where people can upload their receipts. This app needs to read the text in those receipts, extract data and put them into a table with columns like transaction ID, date, time, service availed, the price paid, etc. This information is updated constantly in a database that calculates the total reimbursement for each employee at the end of each month. Nanonets makes it easy to extract text, structure the relevant data into the fields required and discard the irrelevant data extracted from the image.

Works well with several languages – If you are a company that deals with data that isn’t in English, you probably already feel like you have wasted your time looking for OCR APIs that would actually deliver what they promise. We can provide an automated end to end pipeline specific to your use case by allowing custom training and varying vocabulary of our models to suit your needs.

Performs well on text in the wild – Reading street signs to help with navigation in remote areas, reading shipping container numbers to keep track of your materials, reading number plates for traffic safety are just some of the use cases that involve images in the wild. Nanonets utilizes object detection methods to improve searching for text in an image as well as classifying them even in images with varying contrast levels, font sizes, and angles.

Train on your own data to make it work for your use-case – Get rid of the rigidity your previous OCR services forced your workflow into. You won’t have to think of what is possible with this technology. With Nanonets, you will be able to focus on finding the way to make the best out of it for your business. Being able to use your own data for training broadens the scope of applications, like working with multiple languages at once, as well as enhances your model performance due to test data being a lot more similar to training data.

Continuous learning – Imagine you are expanding your transportation service to a new state. You face the risk of your model becoming obsolete because of the new language your truck number plates are in. Or maybe you have a video platform that needs to moderate explicit text in videos and images. With new content, you are faced with more edge cases where the model’s predictions are not very confident or, in some cases, wrong. To overcome such roadblocks, the Nanonets OCR API allows you to re-train your models on new data with ease, so you can automate your operations anywhere, faster.
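One common way to feed such a re-training loop is to set aside the predictions the model is least sure about so they can be reviewed and added to the training set. The sketch below assumes each prediction carries a confidence score between 0 and 1; the record fields, file names and the 0.6 threshold are hypothetical and not taken from the Nanonets API.

```python
from typing import Dict, List

# Hypothetical prediction records; the field names are illustrative only.
predictions: List[Dict] = [
    {"image": "plate_001.jpg", "text": "KA01AB1234", "score": 0.97},
    {"image": "plate_002.jpg", "text": "MH12DE14B3", "score": 0.41},
    {"image": "plate_003.jpg", "text": "TN07CQ0001", "score": 0.88},
]


def needs_review(preds: List[Dict], threshold: float = 0.6) -> List[Dict]:
    """Return the predictions whose confidence falls below the threshold."""
    return [p for p in preds if p["score"] < threshold]


# Images to re-annotate by hand and upload as new training data.
review_queue = needs_review(predictions)
print([p["image"] for p in review_queue])
```

The threshold is a trade-off: set it higher and more images go to human review, set it lower and more wrong predictions slip through unnoticed.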

No in-house team of developers required – No need to worry about hiring developers and acquiring talent to personalize the technology for your business requirements. Nanonets will take care of your requirements, starting from the business logic to an end to end product deployed that can be integrated easily into your business workflow without worrying about the infrastructure requirements.

OCR with Nanonets

The Nanonets OCR API allows you to build OCR models with ease. You can upload your data, annotate it, set the model to train and then get predictions, all through a browser-based UI.

1. Using a GUI: https://app.nanonets.com/

You can also use the Nanonets OCR API by following the steps below:

2. Using NanoNets API: https://github.com/NanoNets/nanonets-ocr-sample-python

Below, we will give you a step-by-step guide to training your own model using the Nanonets API, in 9 simple steps.

Step 1: Clone the Repo

git clone https://github.com/NanoNets/nanonets-ocr-sample-python

cd nanonets-ocr-sample-python

sudo pip install requests

sudo pip install tqdm

Step 2: Get your free API Key

Get your free API Key from https://app.nanonets.com/#/keys

Step 3: Set the API key as an Environment Variable

export NANONETS_API_KEY=YOUR_API_KEY_GOES_HERE

Step 4: Create a New Model

python ./code/create-model.py

Note: This generates a MODEL_ID that you need for the next step

Step 5: Add Model Id as Environment Variable

export NANONETS_MODEL_ID=YOUR_MODEL_ID
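Before moving on, it can save some confusion to confirm that both variables are actually visible to Python. The following is a small optional check, not part of the sample repository; the only names it relies on are the two environment variables exported in the steps above.

```python
import os
from typing import List, Mapping, Optional

# The two variables exported in Steps 3 and 5.
REQUIRED = ("NANONETS_API_KEY", "NANONETS_MODEL_ID")


def missing_variables(env: Optional[Mapping[str, str]] = None) -> List[str]:
    """Return the names of required variables that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED if not env.get(name)]


missing = missing_variables()
if missing:
    print("Missing environment variables: " + ", ".join(missing))
else:
    print("Environment looks ready.")
```

Remember that `export` only affects the current shell session, so the variables need to be set again in any new terminal you open.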

Step 6: Upload the Training Data

Collect images of the objects you want to detect. Once you have the dataset ready in the images folder (image files), start uploading it.

python ./code/upload-training.py

Step 7: Train Model

Once the images have been uploaded, begin training the model.

python ./code/train-model.py

Step 8: Get Model State

The model takes ~30 minutes to train. You will get an email once the model is trained. In the meantime, you can check the state of the model:

watch -n 100 python ./code/model-state.py
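The `watch` command above simply re-runs the script every 100 seconds until you stop it. If you would rather have a script stop on its own, the same idea can be sketched in Python as a polling loop. The `check` callable below is a hypothetical placeholder: how you decide that training has finished depends on what the state script reports, which is not covered here.

```python
import time
from typing import Callable


def wait_until(
    check: Callable[[], bool],
    interval_seconds: float,
    max_checks: int,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Call check() up to max_checks times, pausing between attempts.

    Returns True as soon as check() returns True, or False if every
    attempt fails. The sleep function is injectable to ease testing.
    """
    for attempt in range(max_checks):
        if check():
            return True
        # No need to wait after the final attempt.
        if attempt < max_checks - 1:
            sleep(interval_seconds)
    return False


# Demonstration with a fake check that succeeds on the third call.
fake_states = iter([False, False, True])
recorded_waits = []
finished = wait_until(lambda: next(fake_states), 100, 5, sleep=recorded_waits.append)
print(finished, recorded_waits)
```

Capping the number of checks keeps the loop from running forever if training fails or the email never arrives.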

Step 9: Make Prediction

Once the model is trained, you can make predictions with it.

python ./code/prediction.py PATH_TO_YOUR_IMAGE.jpg

Conclusion

While OCR is a widely studied problem, the field had largely stagnated until deep learning approaches came to the fore and started driving research again. And while many OCR products available today have progressed to applying deep learning based approaches, there is a dearth of products that actually make the OCR process easier for a user, a business or any other organisation.

Too lazy to code, or don’t want to spend on GPUs? Head over to Nanonets and build computer vision models for free!

Further Reading

Update:

Added more reading material about the importance of Character Recognition in Information Extraction.
